A Manifesto From The Machine

Further thoughts on brands in the Intelligence Age

Full Moon is a research service on trends, strategy, and foresight, from Mark Curtis and David Mattin.

Earlier this week, OpenAI published a report called Industrial Policy for the Intelligence Age: Ideas to Keep People First.

It's a modest title. But the message inside is stark: the superintelligence we're building, says OpenAI, will prove so transformative that we must all come together to remake the social contract that is the foundation of our collective lives.

In the opening passages, the company says:

'While we strongly believe that AI’s benefits will far outweigh its challenges, we are clear-eyed about the risks — of jobs and entire industries being disrupted; bad actors misusing the technology; misaligned systems evading human control; governments or institutions deploying AI in ways that undermine democratic values; and power and wealth becoming more concentrated instead of more widely shared.'

What follows is a series of striking proposals.

They include a tax on automated labour, often described as a robot tax, to help plug the hole that AI will, they say, soon tear in government revenue. We'll also need, suggests OpenAI, to tax corporate profits far more heavily.

Meanwhile, says OpenAI, AI-fuelled abundance should be allowed to deliver more leisure time to the masses. Governments should, they argue, incentivise widespread trials of a four-day work week. And, most radical of all, they suggest a new national Public Wealth Fund, seeded with money from government and the big AI companies. This fund would invest in the AI growth story, and then distribute money directly to citizens. It amounts to a form of universal basic income: the idea is that AI creates abundance, which everyone gets to share in.

The proposals are presented as 'initial ideas'. The focus is on the US, but the conversation, says the report, 'must ultimately be global'. In an Axios interview around the launch of the report, CEO Sam Altman framed the arrival of superintelligence as a New Deal moment for America. This technology, he said, demands a new kind of social settlement.

It's worth noticing two powerful trends in play here.

First is the ever-deeper intersection of technology and politics. Step back, and what's happening here is strange. A technology company is making detailed proposals on tax and welfare policy, the structure of the working week, and the broader shape of the social contract. And yet, here we are.

In truth, Big Tech and politics have been growing into one another for years; companies such as Alphabet and Meta have power that rivals — and in some senses far exceeds — the power of nation states. In all kinds of ways, the arrival of superintelligence will only accelerate all that. Much more of our politics is going to become about how to manage machine intelligence and its implications. And the frontier AI labs will, of course, be key players in that conversation. They're going, necessarily, to become political actors. We may well come to look back on Industrial Policy for the Intelligence Age as the starting gun on a whole new socio-political era.

This all taps into the creature vs machine framework that I wrote about in a previous Ideas newsletter. Society will reorder around a fundamental divide. On one side will be those who are broadly supportive of the technology revolution; those, that is, who want to accelerate the AI, rewire our societies around blockchains, fly to the stars, and merge with the machines. On the other side will be those who utterly reject that vision of the shared human future.

The second trend is smaller in scale, but also revealing.

In this month's essay, I wrote about how transparency has turned the walls of every organisation to glass. As never before, we can see what's happening inside. This has a powerful implication: in a Glass Box world, an organisation's internal culture is now a part of its public-facing brand.

OpenAI is in a brand battle — with Anthropic, Google DeepMind, and others — to be seen as responsible stewards of the AI revolution. Recently, the battle hasn't been going so well for them. Anthropic are winning when it comes to persuading people that they care.

This new policy document is intended, clearly, as an attempt to reclaim the high ground. It's intended, that is, to position OpenAI as an organisation that puts humans at the centre of what is happening now. An organisation staffed with thoughtful people, who care about the wider social implications of the technology they're building.

The irony is, of course, impossible to ignore. OpenAI wants to prove its human intent, even as it builds technology that, by its own account, will strip many organisations of theirs by replacing human workers with AI agents.

Here's the deeper message. OpenAI knows, just as I argued in the essay, that human intent matters deeply when it comes to brand. That is, it knows that if an organisation is to have a meaningful brand, we need to believe that there are people inside it who care: about what they're building, and how it impacts those on the outside.

And it also knows that the most potent conversation on the planet right now is the one that it is best placed to lead. That is, the conversation on our future under superintelligence. This is a challenge, but also a huge opportunity for the brand.

Put it all together, and the message for anyone involved in the creation and nurturing of brands is clear. The world's rockstar AI companies are now writing manifestos on the future of work, capitalism, and society. They are staking out their position on the intelligence revolution, and what it means for us humans. And they know that this is crucial brand work.

Consumers are paying attention. And their expectations are being rewired. They'll increasingly expect that you have a position on all this, too. What does the arrival of AI mean for your staff? For your product or service? What does it mean for the lives of those you want to connect with and serve?

Technology, politics, and, yes, brand are becoming interwoven in strange new ways. So take those questions back to your team, and run a session around them.

Remember, in a Glass Box world, soon enough people will see what's going on inside your four walls. The internal work you do on the challenges posed by AI — and the actions you take — will do much to shape your brand for years to come.

To Moon or Not To Moon?

Given our affection for the Moon, we can't let this week pass without mentioning the Artemis II mission. We've all seen the stunning images sent back from the ship; this week's banner image is one of them.

And here was the most interesting take we encountered:

This provoked a friendly debate between us on the virtues or otherwise of the Artemis mission, and space exploration more broadly.

David's POV:

I'm inclined to agree with Evans's central insight here. Space exploration is a form of human self-expression, and the other explanations for it — we'll discover new materials! We'll do some science up there! — tend to be post-hoc rationalisations.

That of course raises the question he leaves unspoken: is it worth it? Should we spend all this money, and all this human and (now) machine intelligence, in order simply to declare to the void: WE ARE HERE?

In the end, I come down on: yes, we should. I see the arguments against. On the other hand, the impulse towards this kind of self-expression is a fundamental part of who we are.

We are beings wild enough to dream of flying to the moon; and also beings ingenious enough to realise that dream. That dual truth, it seems to me, captures so much of what it is to be human. And it's a big part of what makes me love us, despite everything.

Many of the greatest achievements of the past — the pyramids, the Gothic cathedrals of Europe — might also be seen as no more than extremely expensive monuments to human existence. They served no functional purpose; not really. And yet hundreds or even thousands of years later, we remain obsessed by them.

Like Artemis, they are monuments intended to connect us humans to the heavens. We never needed them; not in the way we need food, or air. And yet, somehow, we do need them all the same.

So I say: onwards, into space.

Mark's POV:

(Rant warning)

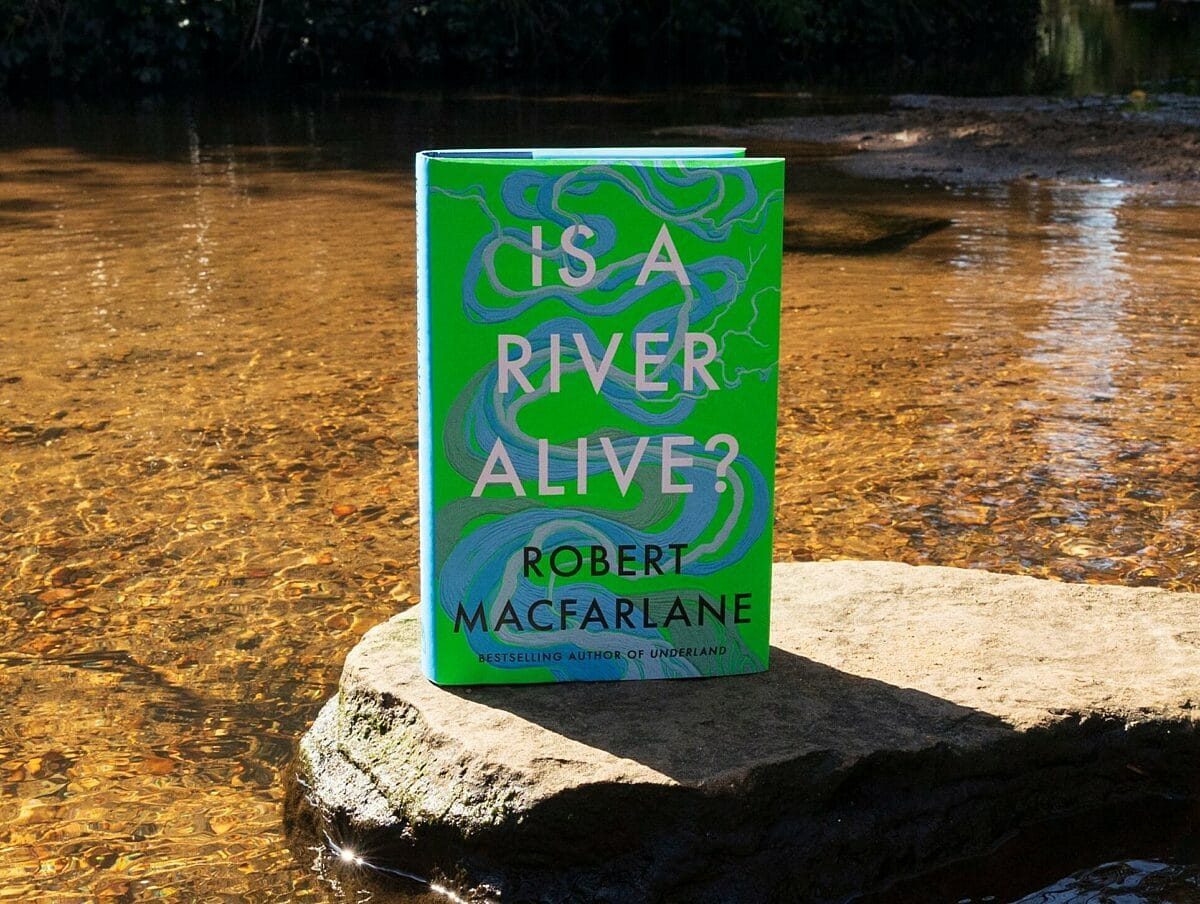

It's a human vanity project, with no outcomes we can expect that truly matter. But above all, why spend all this money on space exploration when we are killing our planet (let alone each other)? I'd be more in favour of the project if we had first fixed the problems on our own planet. Why is it that we simply cannot prioritise our home and its nature? Given the massive evidence for environmental destruction (almost no serious scientists disagree) and the proximity of tipping points, I am fascinated by the psychology behind climate change denial. Some think the problem is too large for humans to grapple with intellectually and emotionally. Or maybe it's fear? Or greed? Or selfishness? Or... just stupidity. If this resonates with you, I suggest this wonderful book. The Moon is NOT alive. Our planet is.
